I noticed a couple of WARNING-level entries in the logs from my most recent scrapes, one for roku and one for plex. Here are the corresponding log entries:

2026-03-17 18:30:18,699 INFO app.worker: [roku] Scrape job started

2026-03-17 18:30:19,305 WARNING app.scrapers.roku: [roku] prewarm seed: could not seed osm_session — no channel succeeded

2026-03-17 18:30:19,305 INFO app.scrapers.roku: [roku] cache warm summary: play_id=0/0 selector=0/0 stream_url=0/0 retry_play=0 retry_selector=0

2026-03-17 18:30:19,305 INFO app.scrapers.roku: [roku] 0 EPG entries fetched for 0 channels

2026-03-17 18:30:19,751 INFO app.worker: [roku] EPG-only run complete — 0 channels, 0 programs (1.1s)

2026-03-17 18:31:26,706 INFO app.worker: [plex] Scrape job started

2026-03-17 18:32:08,623 WARNING urllib3.connectionpool: Retrying (Retry(total=2, connect=3, read=1, redirect=None, status=2)) after connection broken by 'ReadTimeoutError("HTTPSConnectionPool(host='epg.provider.plex.tv', port=443): Read timed out. (read timeout=30)")': /grid?beginningAt=1773826286&endingAt=1773855086

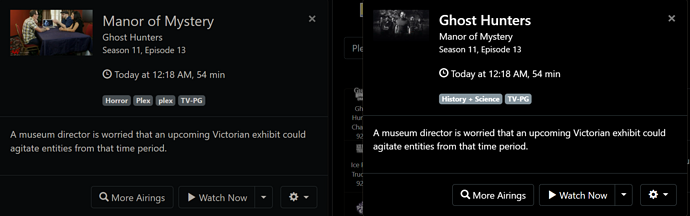

2026-03-17 18:33:30,941 INFO app.scrapers.plex: [plex] 5676 EPG entries fetched from grid API

2026-03-17 18:33:31,101 INFO app.worker: [plex] preserved 2 existing EPG rows across 2 channels with no now coverage (sample channel_ids=818,339)

2026-03-17 18:33:31,678 INFO app.worker: [plex] EPG-only run complete — 678 channels, 5676 programs (125.0s)