I disagree. Other solutions take maybe 20 seconds or so to fully initialize on the same hardware, and they only peak the CPU for a very brief time. They also use less than 200MB of RAM. This one easily pushes past 1GB (according to Portainer). My issue with the Pi may have been a RAM limit, since it was the 1GB model and the Linux OS I had on it added its own overhead. But dirt-poor performance on a 12th-gen i9 is not good. I am not going to load it onto my older mini PC, which runs my main Docker setup: a 2c/4t 7th-gen Intel i7 NUC.

I understand this software is not the same as the single-provider FAST Docker images out there. This one handles multiple providers, so I get that it has a much heavier workload and a longer queue of things to do.

But having to wait several minutes just to bring up the admin UI, even on first run, seems a bit clunky.

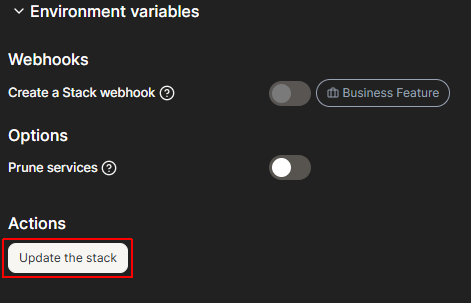

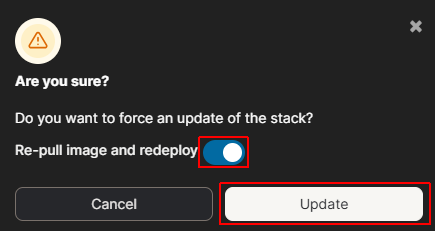

Even Portainer hangs on "deploying stack" for a while as things fire up.

I am just saying there may be a more refined way to do it that is worth considering at some point, one that lessens the initial load and workflow.

Maybe don't have it go all out, guns blazing, on first startup. Instead, do a fast initial startup that just brings up the admin UI, and then give the user options there: trigger a full initial scrape of everything, or run a custom initialization where the user picks which sources to enable or disable first and then runs the initialization scrapes.

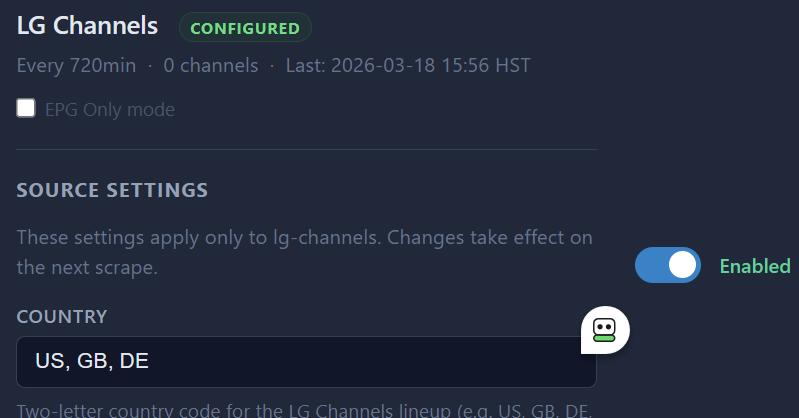

I would also suggest adding user-adjustable scrape intervals at some point. As I have previously mentioned, some of these sources need to be refreshed very often (all of my current sources, which use other methods, are set to 1 hr in Channels). Some FAST sources only have a few hours of guide data, so a once-a-day rescrape is not going to be good enough.

As for guide data, as I already mentioned from my previous comparisons, I am seeing differences and some minor issues overall compared to the mature options already in place (for the ones I use: Pluto, Plex, and Samsung). Xumo is still pretty much unusable. I see comments that guide data is still being worked on, which is good.

Again, this is just my initial quick experience with your software.

It really is impressive how far you have gotten already.

Looking forward to seeing it refined and the bugs worked out.